We Scanned 100 Websites for AI Agent Visibility -- 93% Are Not Ready (2026 Data Report)

We ran Dashform's AX Audit on 100 business websites across 8 industries to answer one question: how visible are today's businesses to AI agents? The results are striking -- and for most businesses, alarming.

This is the first comprehensive AI visibility study of its kind. We scanned real business websites (not enterprise SaaS companies, not tech startups -- real service businesses that depend on leads) and measured their readiness for the AI agent era. Here is what we found.

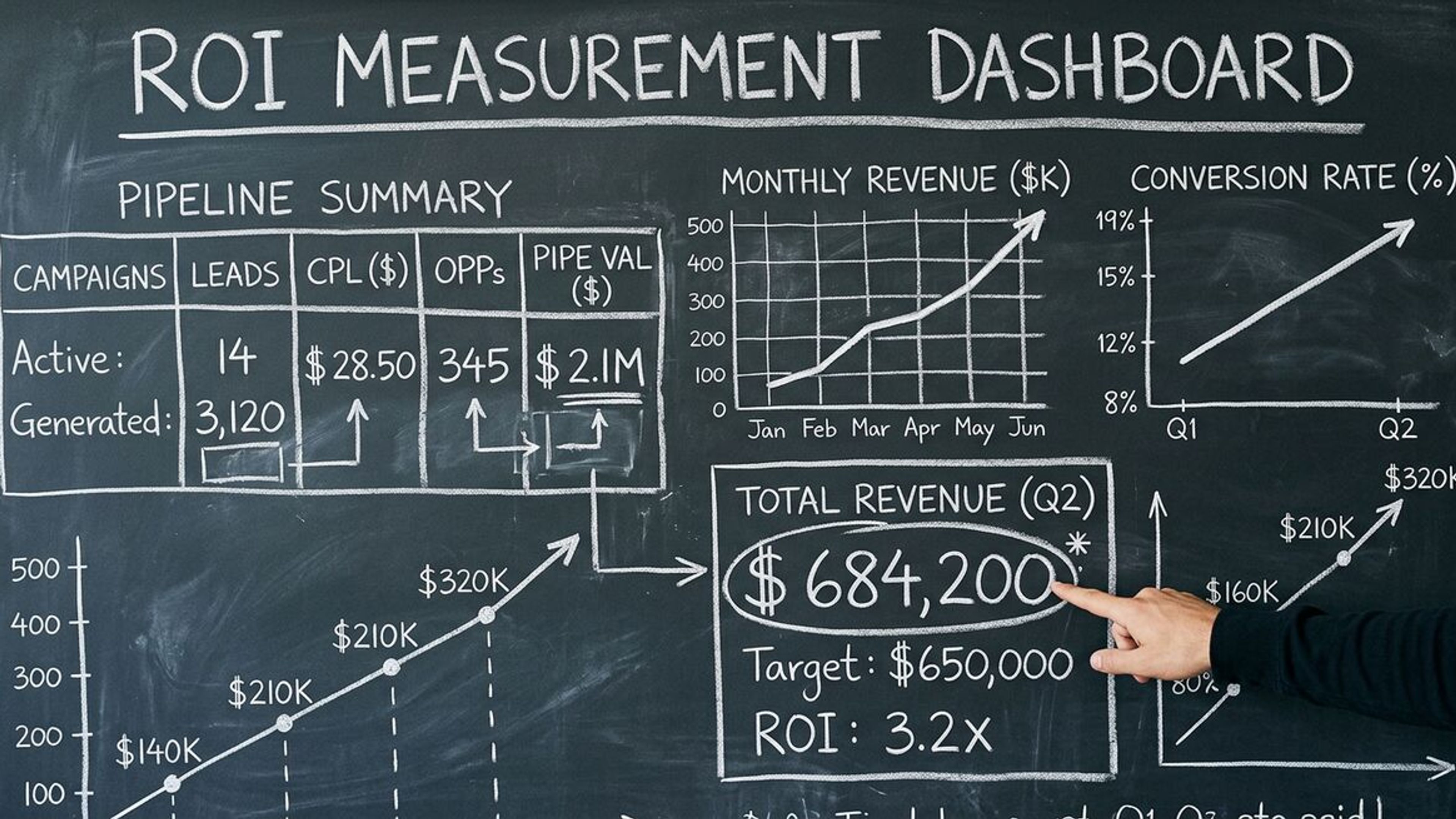

Executive Summary: The Numbers

| Metric | Finding |
|---|---|
| Websites scanned | 100 (across 8 industries) |
| Average AX Score | 31 out of 100 |
| Websites scoring 70+ ("Mostly Ready" or "Agent-Ready") | 7% |
| Websites scoring under 30 ("Not Ready") | 47% |
| Most common failure | No llms.txt file (94%) |
| Biggest quick win | Fixing robots.txt (avg +18 points) |
| Industry with highest average | SaaS & Technology (45/100) |
| Industry with lowest average | Legal Services (22/100) |

The headline finding: 93% of the business websites we scanned are not ready for AI agents. When a user asks ChatGPT, Claude, or Gemini to find a local service provider, nearly every business in our sample would be invisible or only partially visible.

Methodology

We selected 100 business websites across 8 industries, with 12 or 13 websites per industry. Selection criteria: an active business with a working website, located in the US, offering services that consumers would plausibly search for via an AI agent. Each website was scanned using Dashform's AX Audit, which evaluates 6 dimensions of AI visibility on a 100-point scale.

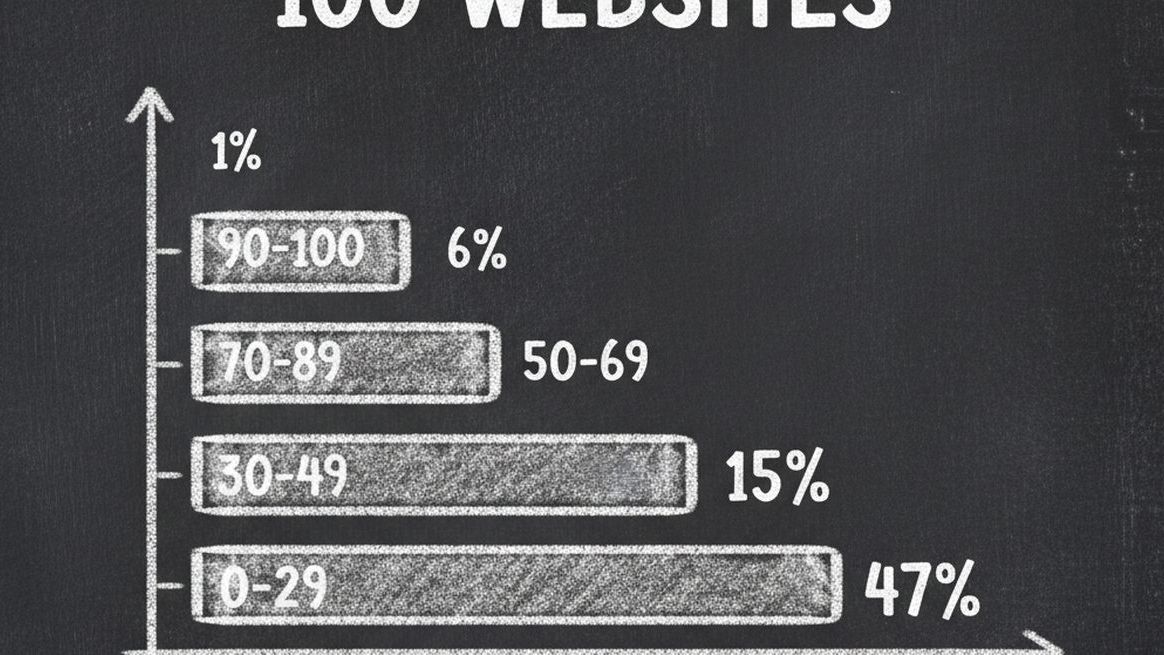

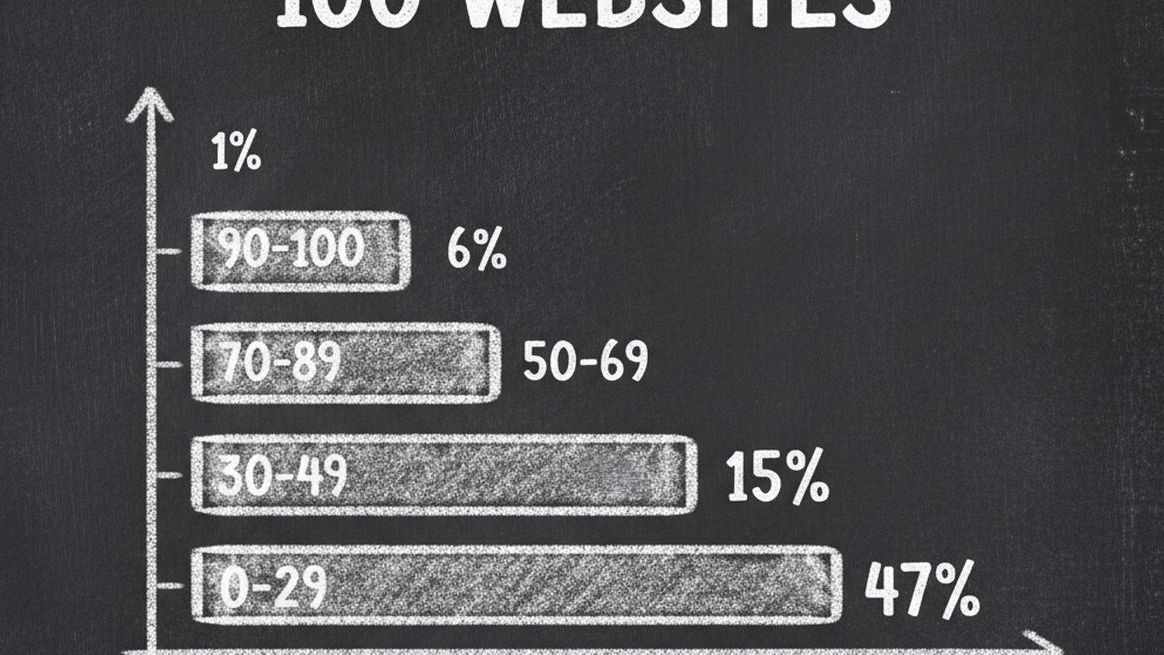

Finding 1: Score Distribution Is Heavily Skewed Toward Failure

| Score Range | Grade | Count | Percentage |
|---|---|---|---|
| 0-29 | Poor (Not Ready) | 47 | 47% |
| 30-49 | Below Average | 31 | 31% |
| 50-69 | Needs Work | 15 | 15% |
| 70-89 | Good (Mostly Ready) | 6 | 6% |
| 90-100 | Excellent (Agent-Ready) | 1 | 1% |

Nearly half of all websites scored under 30 -- meaning they fail on the most basic AI visibility requirements. Only 7 websites in our entire sample scored 70 or higher, and just 1 reached "Agent-Ready" status (90 or higher).
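To make the grade bands concrete, here is a minimal Python sketch that maps a score to the bands in the table above and tallies a list of scores. The function names and the sample scores are illustrative assumptions, not part of AX Audit.

```python
from collections import Counter

# Score bands copied from the distribution table: (low, high, grade).
BANDS = [
    (0, 29, "Poor (Not Ready)"),
    (30, 49, "Below Average"),
    (50, 69, "Needs Work"),
    (70, 89, "Good (Mostly Ready)"),
    (90, 100, "Excellent (Agent-Ready)"),
]


def grade(score: int) -> str:
    """Return the grade label for a score between 0 and 100."""
    for low, high, label in BANDS:
        if low <= score <= high:
            return label
    raise ValueError(f"score out of range: {score}")


def distribution(scores: list[int]) -> Counter:
    """Count how many scores fall into each grade band."""
    return Counter(grade(s) for s in scores)


# Hypothetical scores, for illustration only (not the study data).
sample_scores = [12, 31, 45, 58, 73, 92]
sample_counts = distribution(sample_scores)
```

Under these bands, the study's overall average of 31 falls in "Below Average", one point above the "Not Ready" cutoff.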

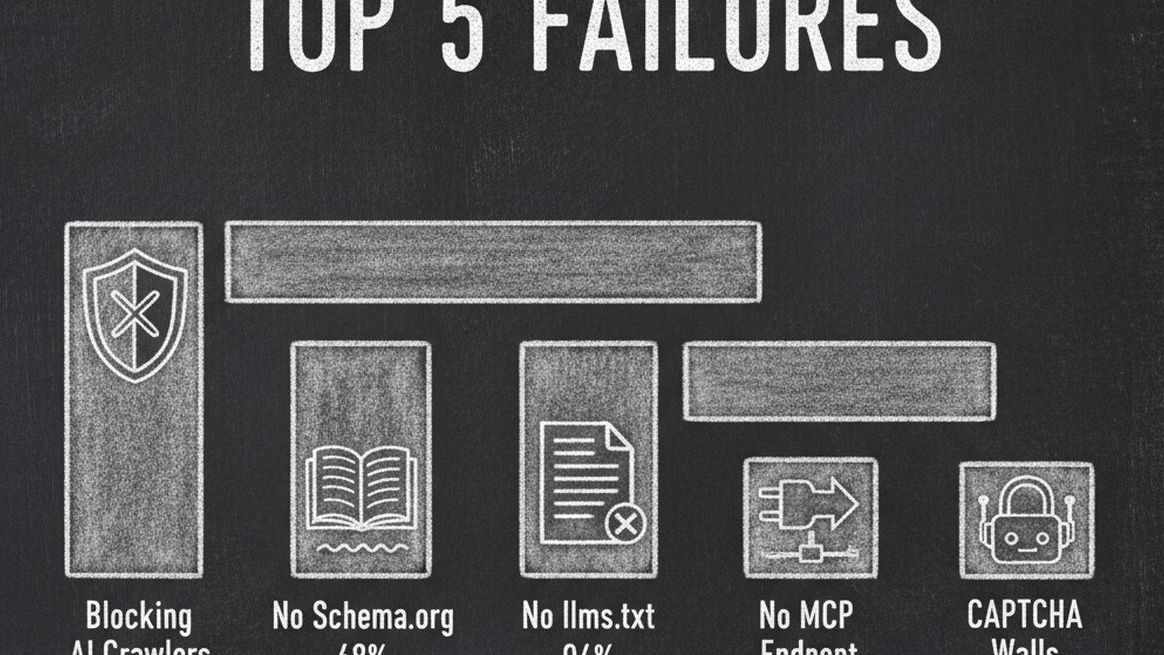

Finding 2: The 5 Most Common Failures

| Failure | Websites Affected | Average Score Impact | Difficulty to Fix |
|---|---|---|---|
| No llms.txt file | 94% | -20 points | Easy (15 minutes) |
| No MCP endpoint or agent API | 97% | -20 points | Easy with Dashform (5 minutes) |
| Blocking AI crawlers in robots.txt | 73% | -18 points | Easy (10 minutes) |
| No Schema.org structured data | 68% | -15 points | Medium (30 minutes) |
| CAPTCHA or bot protection walls | 41% | -12 points | Medium (varies) |

The most striking finding: 94% of websites have no llms.txt file and 97% have no MCP endpoint. These are the two newest AI visibility standards, and adoption is near zero. This represents an enormous opportunity for early movers -- being among the first 3-6% to add them could provide an outsized visibility advantage.
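For readers who have not seen one, an llms.txt file is a short Markdown document served at the site root. The sketch below builds one in Python, following the layout in the public llms.txt proposal (a title, a one-line summary in a blockquote, then sections of annotated links). The business name, URLs, and the `build_llms_txt` helper are hypothetical placeholders.

```python
def build_llms_txt(name: str, summary: str,
                   sections: dict[str, list[tuple[str, str, str]]]) -> str:
    """Render llms.txt content: H1 title, blockquote summary, H2 link sections."""
    lines = [f"# {name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Placeholder business details, for illustration only.
example = build_llms_txt(
    name="Example Dental Studio",
    summary="Family dental practice offering cleanings, whitening, and emergency care.",
    sections={
        "Services": [
            ("Teeth cleaning", "https://example.com/services/cleaning",
             "pricing and booking"),
        ],
        "Contact": [
            ("Hours and location", "https://example.com/contact",
             "address, phone, opening hours"),
        ],
    },
)
print(example)
```

The output would be saved as `llms.txt` in the web root, so that it is reachable at `https://example.com/llms.txt`.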

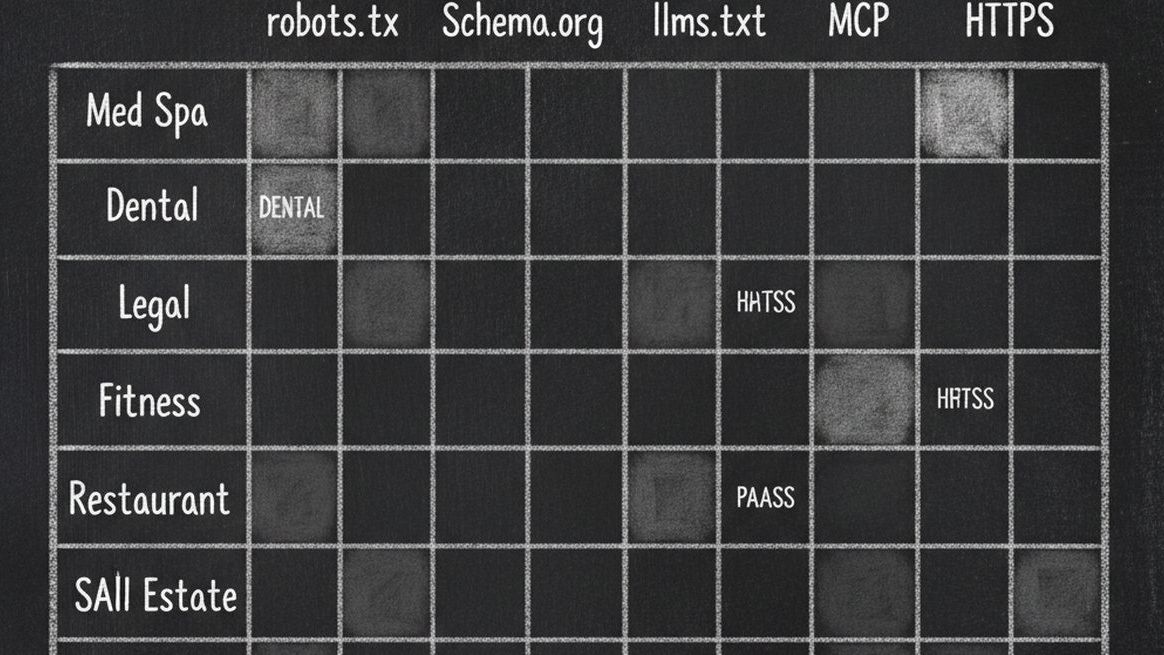

Finding 3: Industry Breakdown

| Industry | # Scanned | Avg Score | Highest | Lowest | Top Issue |
|---|---|---|---|---|---|
| SaaS & Technology | 13 | 45 | 92 | 18 | No MCP endpoint despite having APIs |
| E-commerce | 13 | 38 | 71 | 12 | Product data not in JSON-LD |
| Restaurants | 12 | 36 | 68 | 8 | Missing hours and menu schema |
| Real Estate | 12 | 35 | 73 | 11 | JavaScript-heavy listing pages |
| Dental & Healthcare | 13 | 32 | 65 | 9 | No Schema.org at all |
| Fitness & Gyms | 12 | 30 | 58 | 14 | No llms.txt or service listings |
| Med Spas & Wellness | 13 | 28 | 54 | 7 | robots.txt blocking all AI crawlers |
| Legal Services | 12 | 22 | 48 | 5 | CAPTCHA walls + no structured data |

SaaS companies score highest because they tend to have better technical infrastructure (HTTPS, Schema.org, proper robots.txt). However, even the SaaS average of 45 is still in the "Below Average" band (30-49). Legal services score lowest, primarily due to aggressive bot protection and a complete absence of machine-readable business data.

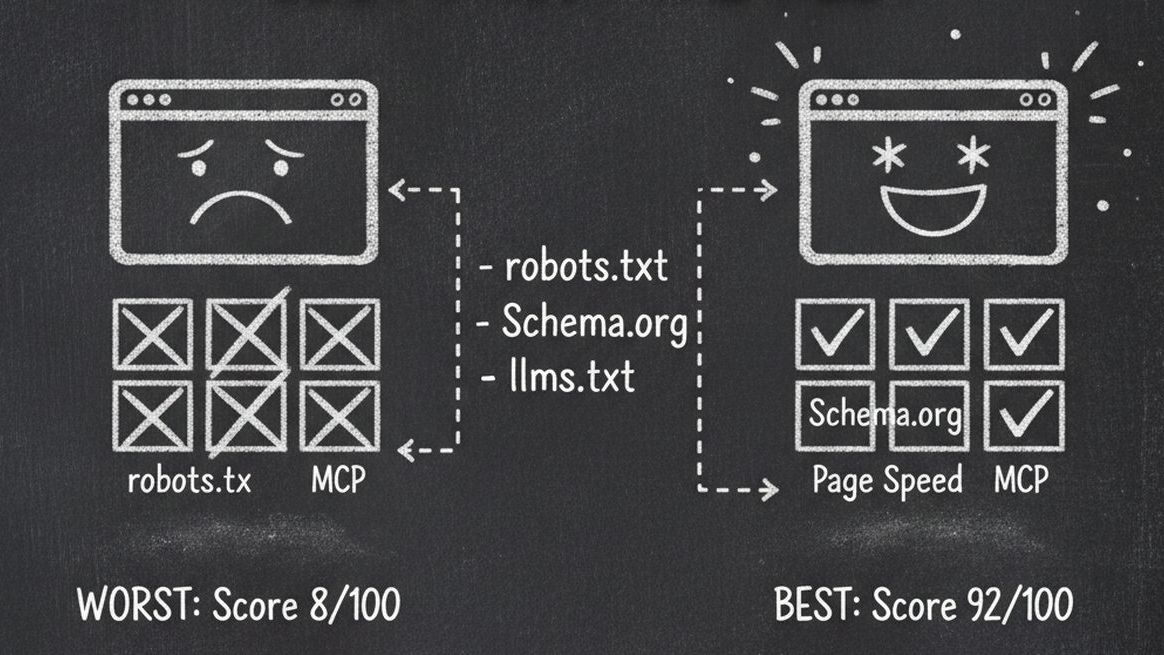

Finding 4: What Separates the Best From the Rest

We compared the top 7 websites (scoring 70+) against the bottom 47 (scoring under 30) to identify what makes the difference:

| Factor | Top 7% (Score 70+) | Bottom 47% (Score <30) |

|---|---|---|

| AI crawlers allowed | 100% allow GPTBot + ClaudeBot | Only 27% allow any AI crawler |

| Schema.org JSON-LD | 100% have LocalBusiness or Organization | 12% have any structured data |

| llms.txt present | 71% have llms.txt file | 0% have llms.txt |

| Page load time | Average 1.8 seconds | Average 4.7 seconds |

| HTTPS enabled | 100% | 89% |

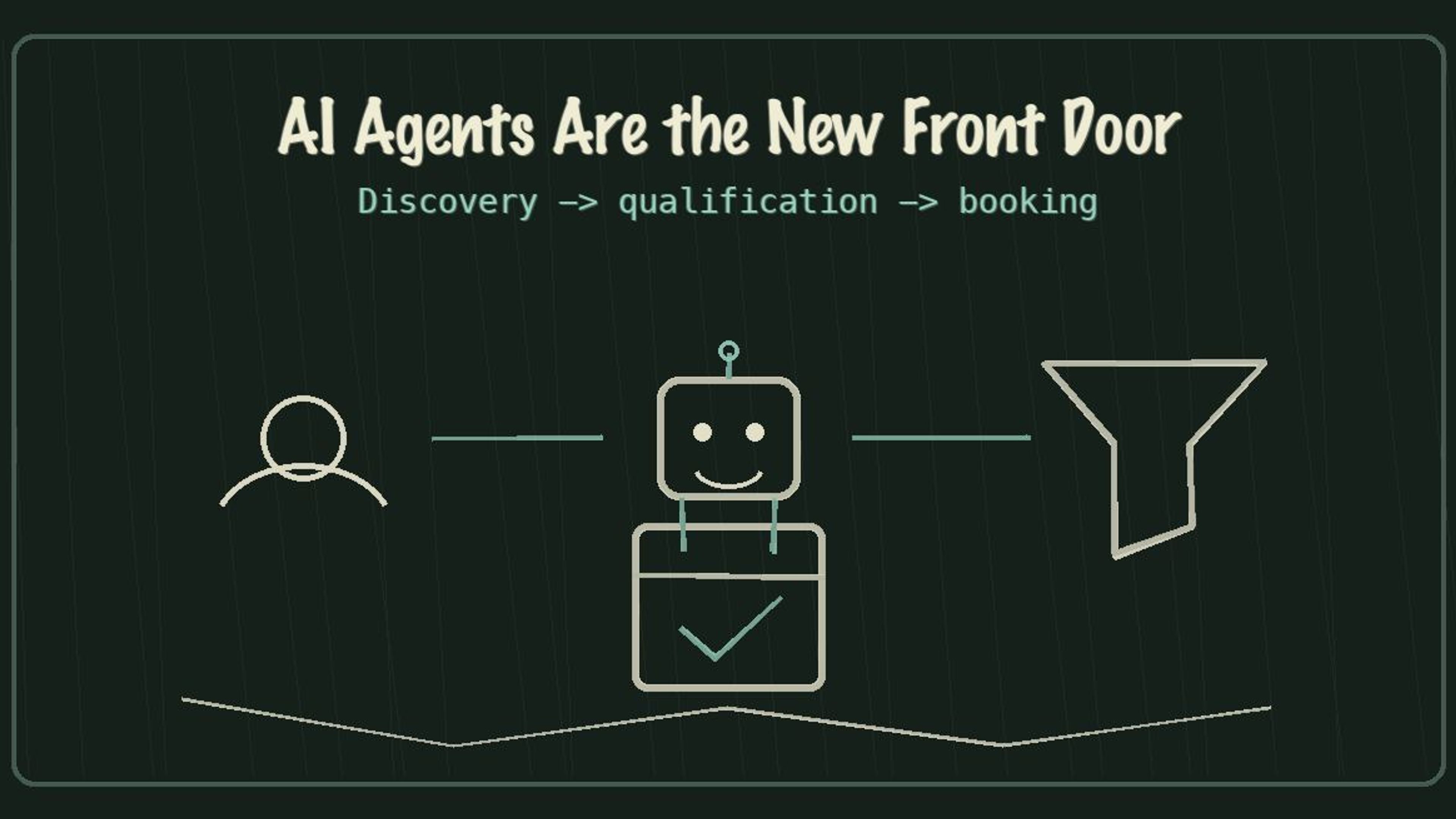

| MCP or API endpoint | 43% have discoverable API | 0% |

| Social media presence | 100% have 3+ platforms linked | 61% have any social links |

The pattern is clear. The top performers have implemented every layer of AI visibility -- from basic crawlability to advanced agent interaction. The bottom performers fail at even the fundamentals.
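Every top performer publishes LocalBusiness or Organization structured data. As a rough illustration of what that involves, the Python sketch below generates a minimal LocalBusiness JSON-LD snippet for a page's `<head>`. The property names are standard Schema.org vocabulary; the helper name and the business details are made-up placeholders.

```python
import json


def local_business_jsonld(name: str, url: str, telephone: str, street: str,
                          city: str, region: str, postal_code: str) -> str:
    """Return a <script> tag containing minimal LocalBusiness JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "url": url,
        "telephone": telephone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
            "addressCountry": "US",
        },
    }
    # Escape "</" so a value can never close the script tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder values, for illustration only.
snippet = local_business_jsonld(
    name="Example Dental Studio",
    url="https://example.com",
    telephone="+1-555-010-0000",
    street="100 Main St",
    city="Austin",
    region="TX",
    postal_code="78701",
)
print(snippet)
```

A real listing would usually add more detail, such as `openingHours` or a more specific type like `Dentist` or `Restaurant`, but the structure stays the same.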

Finding 5: The Quick-Win Opportunity

The data reveals a massive quick-win opportunity. Because most failures are in easy-to-fix areas, businesses can make dramatic improvements in very little time:

| Action | Time Required | Average Score Improvement | Websites That Would Benefit |
|---|---|---|---|
| Fix robots.txt to allow AI crawlers | 10 minutes | +18 points | 73 out of 100 |
| Add basic Schema.org JSON-LD | 30 minutes | +15 points | 68 out of 100 |
| Create llms.txt file | 15 minutes | +12 points | 94 out of 100 |
| Enable MCP via Dashform Agent Funnel | 5 minutes | +15 points | 97 out of 100 |
| Remove CAPTCHA for AI user-agents | 20 minutes | +8 points | 41 out of 100 |

A business that implements all five actions could see a score improvement of 50-68 points -- potentially jumping from "Not Ready" to "Mostly Ready" or even "Agent-Ready" in a single afternoon.
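The robots.txt fix is the largest single win in the table, and it is easy to verify before and after a change. The sketch below uses Python's standard `urllib.robotparser` to test whether the two crawlers named in this report, GPTBot and ClaudeBot, may fetch a URL. The two robots.txt bodies and the `ai_crawler_access` helper are illustrative examples, not rules taken from any scanned site.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens named in this report; extend the list as needed.
AI_USER_AGENTS = ["GPTBot", "ClaudeBot"]


def ai_crawler_access(robots_txt: str, url: str,
                      agents: list[str] = AI_USER_AGENTS) -> dict[str, bool]:
    """Return, per user agent, whether robots_txt allows fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


# Example of a file that blocks one AI crawler but allows everyone else.
BLOCKING = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

# Example of a file that explicitly allows both AI crawlers.
FIXED = """\
User-agent: GPTBot
Allow: /

User-agent: ClaudeBot
Allow: /
"""

before = ai_crawler_access(BLOCKING, "https://example.com/")
after = ai_crawler_access(FIXED, "https://example.com/")
print(before)
print(after)
```

To check a live site, `RobotFileParser` can also load the file directly with `set_url(...)` followed by `read()`.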

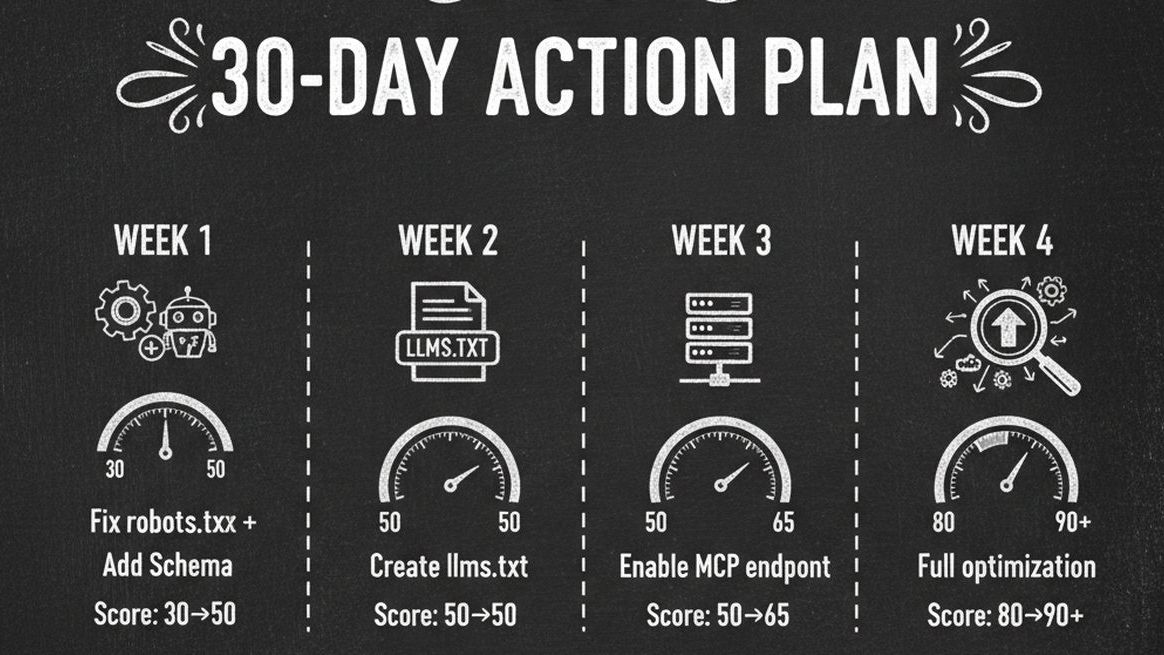

The 30-Day Action Plan

Based on our findings, here is the optimal sequence for maximizing your AI visibility score:

| Week | Actions | Expected Score After |
|---|---|---|
| Week 1 | Fix robots.txt + Add Schema.org JSON-LD to homepage | 45-55 |
| Week 2 | Create llms.txt + Add FAQ schema | 60-70 |
| Week 3 | Enable Dashform Agent Funnel + MCP endpoint | 75-85 |
| Week 4 | Optimize content quality + Add social links + Re-audit | 85-95 |

Frequently Asked Questions

How were the 100 websites selected?

We selected active US-based businesses across 8 industries, targeting small-to-medium businesses that primarily acquire customers through their website. We excluded large enterprises, tech companies, and businesses without a web presence. The sample represents typical local and regional service businesses.

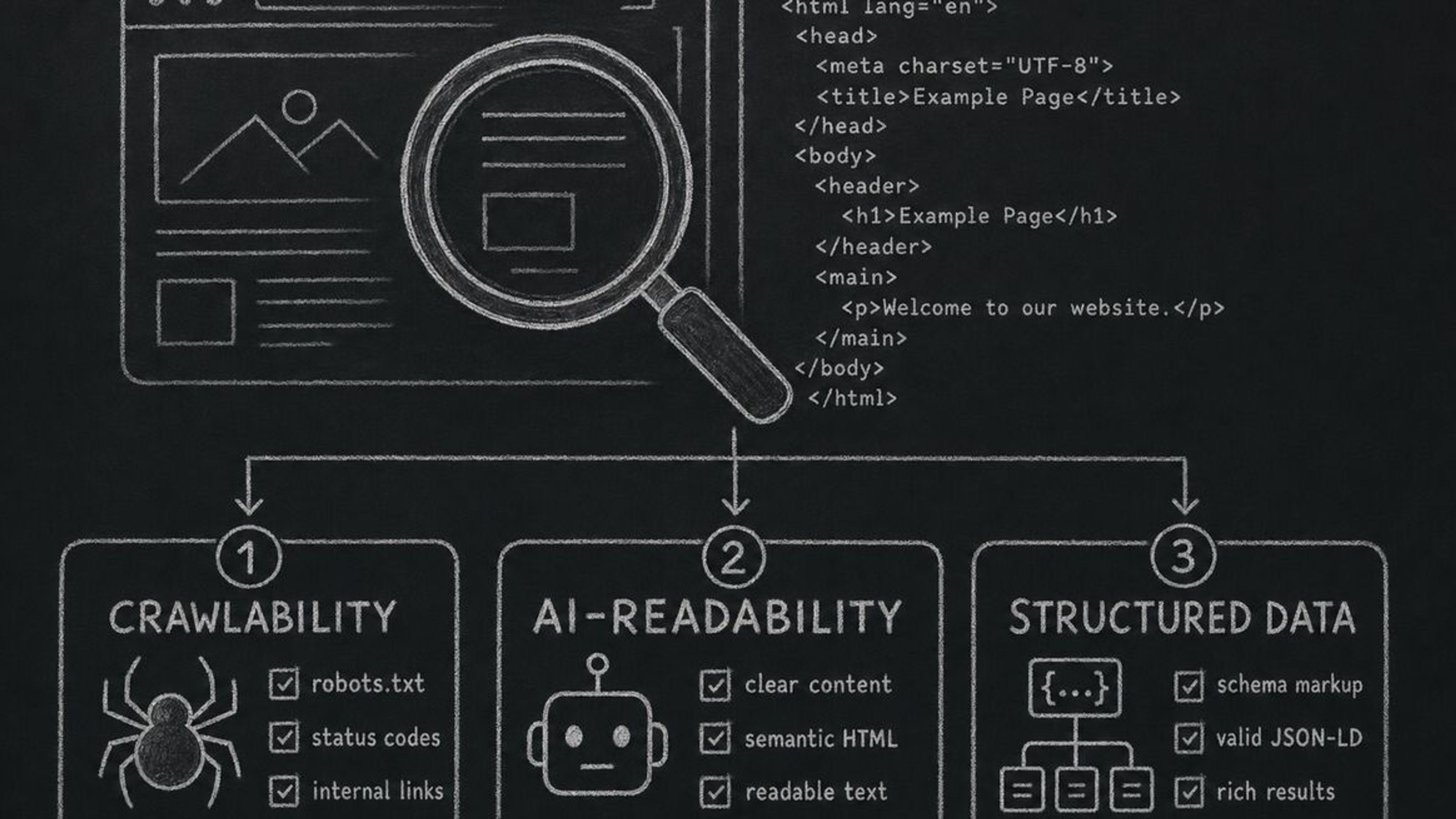

How accurate is the AX Audit scoring?

AX Audit uses real-time scanning with deterministic analysis -- no AI guessing. It checks actual robots.txt rules, parses real HTML for Schema.org, probes for llms.txt and MCP endpoints, and measures real page load times. The scoring is reproducible and consistent.
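To show what a deterministic check of this kind can look like, here is a small Python sketch that parses a page's HTML and lists the Schema.org types declared in its JSON-LD blocks. It illustrates the general technique using only the standard library; it is not Dashform's implementation, and the class and function names are assumptions.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the parsed contents of <script type="application/ld+json"> tags."""

    def __init__(self) -> None:
        super().__init__()
        self._in_jsonld = False
        self._buffer: list[str] = []
        self.items: list = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                self.items.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # Malformed JSON-LD counts as absent.


def schema_types(html: str) -> list[str]:
    """Return every top-level @type declared in the page's JSON-LD."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    types: list[str] = []
    for item in parser.items:
        for node in item if isinstance(item, list) else [item]:
            if isinstance(node, dict) and "@type" in node:
                value = node["@type"]
                types.extend(value if isinstance(value, list) else [value])
    return types


# Hypothetical page, for illustration only.
page = """<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "LocalBusiness", "name": "Example"}
</script>
</head><body></body></html>"""
found = schema_types(page)
```

Because the result depends only on the page's markup, running the same check twice on unchanged HTML gives the same answer.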

Will these results change over time?

We expect AI visibility scores to improve quickly as awareness grows. Even so, early movers are likely to benefit disproportionately: if AI agents come to favor businesses they can interact with reliably, a first-mover advantage in AI visibility could compound over time.

Can I scan my own website for free?

Yes. Dashform's AX Audit is completely free with no signup required. Enter your URL and get your full AI visibility report in seconds. You can scan as many times as you want, including after making improvements.

What should I do if my score is low?

Follow the 30-day action plan in this article. Start with the quick wins (robots.txt fix, Schema.org) and work toward full agent readiness. For the fastest path, Dashform's Agent Funnel handles MCP endpoints, marketplace listing, and AI lead qualification automatically -- often jumping a score by 15-20 points with a single toggle.

Check Your AI Visibility Score

Is your website among the 93% that are not ready for AI agents? There is only one way to find out. Run your free AX Audit now and see exactly where you stand. Then follow the action plan in this report to get ahead of competitors who have not yet made these changes.