The March 2026 AI Model War: GPT-5.4 vs Claude vs Gemini for Forms and Quizzes

March 2026 marks a turning point in the AI race. OpenAI released GPT-5.4 with native computer use and a 1 million token context window. Anthropic's Claude 3.7 Opus continues to lead in reasoning and safety benchmarks. Google's Gemini 2.5 Pro dominates multimodal tasks with native audio and video understanding. For businesses that use AI to power forms, quizzes, surveys, and lead qualification, the question is no longer whether to use AI. It is which AI to use.

This breakdown compares all three models across the metrics that actually matter for business applications: accuracy, cost, speed, capability, and real-world performance with forms and interactive content.

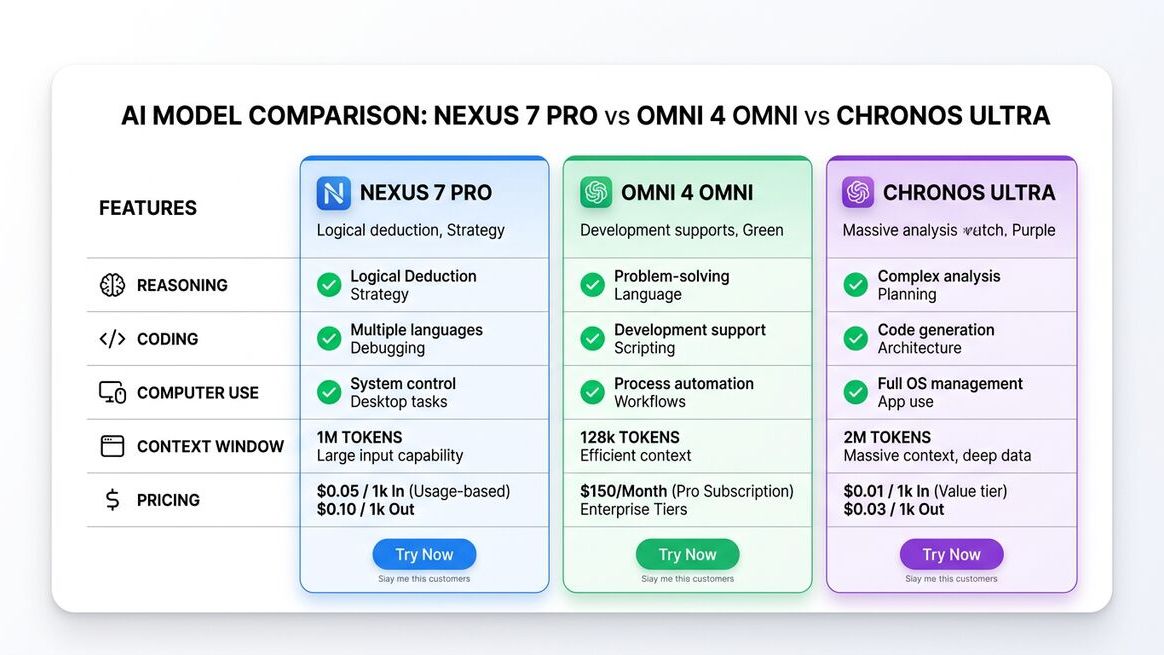

GPT-5.4 vs Claude 3.7 Opus vs Gemini 2.5 Pro: Head-to-Head Comparison

| Feature | GPT-5.4 (OpenAI) | Claude 3.7 Opus (Anthropic) | Gemini 2.5 Pro (Google) |
|---|---|---|---|
| Release Date | March 5, 2026 | February 2026 | January 2026 |
| Context Window | 1M tokens | 500K tokens | 2M tokens |
| Computer Use | Native (75% OSWorld) | API-based | Limited preview |
| MMLU Score | 92.3% | 91.8% | 90.5% |
| Coding (HumanEval) | 94.1% | 95.2% | 89.7% |
| GDPval (Real-World) | 83% | 79% | 76% |
| Hallucination Rate | -33% vs GPT-5 | Lowest in class | -20% vs Gemini 2.0 |
| Input Cost (USD per 1M tokens) | $2.50 | $15.00 | $1.25 |
| Output Cost (USD per 1M tokens) | $15.00 | $75.00 | $5.00 |
| Best For | Computer use, business automation | Complex reasoning, safety-critical | Multimodal, high volume |

GPT-5.4: The Action-Taker

OpenAI's strategy with GPT-5.4 is clear: make AI that does things, not just says things. The native computer use capability is the headline feature, but several under-the-radar improvements matter more for form and quiz builders:

- Tool Search reduces token usage by 47%, which means complex multi-tool workflows cost nearly half as much to run.

- The 1 million token context window means AI can analyze entire form submission histories, customer databases, and qualification criteria in a single prompt.

- A 33% reduction in hallucinations relative to GPT-5 makes it reliable enough for customer-facing form responses and lead qualification decisions.

- API pricing at $2.50 per million input tokens makes it the best value for medium-complexity tasks.

For businesses using AI-powered quiz funnels, GPT-5.4 excels at generating personalized results based on quiz responses. Its computer use capability also enables end-to-end automation: from the moment a lead completes a quiz to the moment they are booked on your calendar.

Claude 3.7 Opus: The Reasoning Expert

Anthropic's Claude 3.7 Opus takes a different approach. Where GPT-5.4 focuses on action, Claude focuses on understanding. Its strengths fall in several critical areas:

- Highest coding benchmark scores (95.2% HumanEval) make it the best choice for building custom form logic and integrations.

- Industry-leading safety alignment means it produces the most reliable, least biased content for sensitive applications like healthcare intake forms, legal questionnaires, and financial assessments.

- Extended thinking mode lets it work through complex multi-step reasoning, which is ideal for qualification scoring that involves multiple criteria and nuanced decisions.

- 500K token context window handles most business use cases comfortably.

The trade-off is cost. At $15 per million input tokens, Claude 3.7 Opus costs six times as much as GPT-5.4 ($2.50) for input, and five times as much for output ($75 versus $15 per million tokens). For high-volume form processing, this adds up quickly. However, for complex qualification logic where accuracy is worth the premium, Claude often delivers better results.
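To make the cost gap concrete, here is a minimal sketch that estimates spend per model from the per-million-token prices in the comparison table. The workload figures (100,000 submissions, 1,500 input tokens and 300 output tokens each) are hypothetical and chosen only for illustration; substitute your own volumes.

```python
# Per-million-token prices in USD, copied from the comparison table.
PRICES = {
    "GPT-5.4": {"input": 2.50, "output": 15.00},
    "Claude 3.7 Opus": {"input": 15.00, "output": 75.00},
    "Gemini 2.5 Pro": {"input": 1.25, "output": 5.00},
}


def estimated_cost(model, submissions, input_tokens, output_tokens):
    """Estimated USD cost of processing `submissions` forms with `model`.

    `input_tokens` and `output_tokens` are per-submission averages.
    """
    price = PRICES[model]
    total_in = submissions * input_tokens
    total_out = submissions * output_tokens
    return (total_in * price["input"] + total_out * price["output"]) / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload, not a measured figure.
    for model in PRICES:
        cost = estimated_cost(model, 100_000, 1_500, 300)
        print(f"{model}: ${cost:,.2f}")
```

Under these assumed volumes, the estimate works out to $825.00 for GPT-5.4, $4,500.00 for Claude 3.7 Opus, and $337.50 for Gemini 2.5 Pro. Output-heavy workloads shift the ratios, since output tokens are priced higher than input tokens for all three models.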

Gemini 2.5 Pro: The Volume Player

Google's Gemini 2.5 Pro wins on two fronts: context window size and cost. With a 2 million token context window and the lowest pricing of the three models at $1.25 per million input tokens, it is purpose-built for high-volume processing.

Where Gemini shines for form builders:

- Largest context window (2M tokens) can take in massive datasets, entire customer databases, or thousands of form submissions in a single pass.

- Native multimodal understanding means it can process form submissions that include images, audio recordings, and video. Think photo upload forms, voice surveys, and video testimonials.

- Lowest cost makes it ideal for high-volume, lower-complexity tasks like basic form generation, simple survey analysis, and bulk data processing.

- Deep Google ecosystem integration benefits businesses already on Google Workspace.
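Whether a batch of submissions really fits "in a single pass" depends on its token count. The sketch below checks a batch against the context window sizes from the comparison table. It assumes a rough heuristic of four characters per token and a hypothetical 10,000-token reserve for instructions and the response; real tokenizers vary by model and language, so treat the result as an estimate only.

```python
# Context window sizes in tokens, copied from the comparison table.
CONTEXT_WINDOWS = {
    "GPT-5.4": 1_000_000,
    "Claude 3.7 Opus": 500_000,
    "Gemini 2.5 Pro": 2_000_000,
}

# Assumption: roughly 4 characters per token. Actual tokenizers differ.
CHARS_PER_TOKEN = 4


def estimate_tokens(text):
    """Crude token estimate based on character count."""
    return len(text) // CHARS_PER_TOKEN + 1


def models_that_fit(submissions, reserve_tokens=10_000):
    """Return the models whose context window can hold the whole batch.

    `reserve_tokens` is a hypothetical allowance for the prompt
    instructions and the model's response.
    """
    needed = sum(estimate_tokens(s) for s in submissions) + reserve_tokens
    return [name for name, window in CONTEXT_WINDOWS.items() if needed <= window]


if __name__ == "__main__":
    # 5,000 synthetic submissions of 400 characters each.
    batch = ["x" * 400] * 5_000
    print(models_that_fit(batch))
```

When a batch does not fit any model, the usual fallback is to split it into chunks and summarize each chunk separately.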

The weakness is in complex reasoning and action. Gemini trails GPT-5.4 in real-world task completion (GDPval) and does not match Claude's reasoning depth for nuanced qualification decisions.

Which Model Wins for Specific Use Cases?

Best AI Model by Use Case

| Use Case | Best Model | Why |
|---|---|---|
| Quiz funnel generation | GPT-5.4 | Best balance of quality, cost, and automation |
| Complex lead qualification | Claude 3.7 Opus | Superior reasoning for multi-criteria scoring |
| High-volume survey processing | Gemini 2.5 Pro | Lowest cost, largest context window |
| Client onboarding automation | GPT-5.4 | Computer use handles multi-tool workflows |
| Healthcare/legal intake forms | Claude 3.7 Opus | Best safety alignment and accuracy |
| Multimodal forms (photo/video) | Gemini 2.5 Pro | Native image/video understanding |
| CRM data entry automation | GPT-5.4 | Computer use navigates CRM interfaces |
| Form building and customization | Claude 3.7 Opus | Highest coding benchmark scores |
| Budget-constrained projects | Gemini 2.5 Pro | 50% cheaper than GPT-5.4 for input |

The Multi-Model Strategy: Why You Do Not Have to Choose Just One

The smartest businesses in 2026 are not picking one model. They are using multiple models for different tasks. A practical multi-model form and quiz stack looks like this:

- Use GPT-5.4 for computer use automation and end-to-end funnel management.

- Use Claude 3.7 Opus for complex qualification logic and safety-critical content generation.

- Use Gemini 2.5 Pro for high-volume processing and multimodal form analysis.

Dashform is designed to work with multiple AI models, letting you choose the best engine for each form type and use case.

This approach optimizes for cost, accuracy, and capability simultaneously. You get GPT-5.4's automation, Claude's reasoning, and Gemini's volume pricing, each where they perform best.
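In code, a multi-model strategy often reduces to a routing table. The following is a minimal sketch that maps each use case from the table above to its recommended model. The use-case keys and the `pick_model` helper are hypothetical names introduced for illustration, not part of any vendor or Dashform API, and the GPT-5.4 fallback for unlisted use cases is an assumption.

```python
# Use case -> recommended model, following the "Best AI Model by Use Case" table.
# The snake_case keys are hypothetical identifiers for this sketch.
ROUTES = {
    "quiz_funnel_generation": "GPT-5.4",
    "complex_lead_qualification": "Claude 3.7 Opus",
    "high_volume_survey_processing": "Gemini 2.5 Pro",
    "client_onboarding_automation": "GPT-5.4",
    "healthcare_legal_intake": "Claude 3.7 Opus",
    "multimodal_forms": "Gemini 2.5 Pro",
    "crm_data_entry": "GPT-5.4",
    "form_building": "Claude 3.7 Opus",
    "budget_constrained": "Gemini 2.5 Pro",
}

# Assumption: fall back to GPT-5.4 when a use case is not listed.
DEFAULT_MODEL = "GPT-5.4"


def pick_model(use_case):
    """Return the model name to use for a given use case."""
    return ROUTES.get(use_case, DEFAULT_MODEL)


if __name__ == "__main__":
    for case in ("complex_lead_qualification", "multimodal_forms", "something_else"):
        print(f"{case} -> {pick_model(case)}")
```

Keeping the mapping in one place makes it easy to re-test a use case against a different model and change a single line when pricing or benchmarks shift.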

What This Means for Form and Quiz Builders

The AI model war is good news for anyone building forms, quizzes, and interactive content. Competition is driving down prices, pushing up capabilities, and creating specialized strengths you can leverage:

- Form generation quality improves with every model update. AI-generated forms now match or exceed human-designed forms in completion rates.

- Qualification accuracy keeps climbing. The gap between AI-scored and human-scored leads shrinks with each model generation.

- Automation depth expands. GPT-5.4 computer use means you can automate workflows that were impossible to automate before.

- Costs keep falling. The average cost of AI-powered form processing has dropped 70% in the past 12 months.

If you are still using static forms without AI, you are leaving money on the table. AI-powered quiz generators convert 3-5x more visitors than traditional forms, and the cost of running them drops with every model release.

Frequently Asked Questions

Which AI model is cheapest for form processing?

Gemini 2.5 Pro is the cheapest at $1.25 per million input tokens, followed by GPT-5.4 at $2.50, and Claude 3.7 Opus at $15.00. For most form processing tasks, GPT-5.4 offers the best value when you factor in quality and capability. For pure bulk processing, Gemini wins on cost.

Can I switch between AI models without rebuilding my forms?

Yes, if you use a platform like Dashform that supports multiple AI backends. Forms and quiz logic remain the same; only the AI engine processing the data changes. This lets you test different models and optimize for your specific use case.

Is GPT-5.4 computer use available through APIs?

Yes. OpenAI offers computer use through the GPT-5.4 API with specific endpoints for screen interaction, keyboard and mouse control, and multi-step task execution. Third-party platforms are rapidly integrating these capabilities into their products.

Which model hallucinates the least?

Claude 3.7 Opus has the lowest hallucination rate overall, followed by GPT-5.4, which reduced hallucinations by 33% compared to GPT-5. For business-critical applications where accuracy matters most, Claude is the safest choice. GPT-5.4 is reliable enough for most commercial applications.

Will these models keep getting cheaper?

Yes. AI model pricing has dropped 60-80% year over year since 2024, and competition between OpenAI, Anthropic, and Google ensures this trend continues. GPT-5.4 is already 29% cheaper per token than GPT-5. Expect another significant price drop by mid-2026.

The Verdict

There is no single best AI model in March 2026. GPT-5.4 wins for automation and action. Claude 3.7 Opus wins for reasoning and safety. Gemini 2.5 Pro wins for volume and cost. The real advantage goes to businesses that use each model where it performs best. Start with AI-powered forms and quizzes that leverage the right model for each job, and watch your conversion rates climb while your costs drop.